I think we could use Haxe as a connector between Python (Blender) and C++ (OpenPose).

I don’t know how to do it though.
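One low-tech way to connect the pieces, without writing any bindings, might be to let OpenPose dump its results to JSON files and read those from Python on the Blender side. Below is a minimal sketch of the reading half. It assumes the layout I remember from OpenPose's JSON output (a `people` list whose entries carry a flat `pose_keypoints_2d` array of x, y, confidence triples); the function name `parse_openpose_frame`, the `min_confidence` threshold, and the sample data are made up for illustration.

```python
import json


def parse_openpose_frame(json_text, min_confidence=0.1):
    """Parse one frame of OpenPose-style JSON.

    Returns one list per detected person. Each list holds an
    (x, y, confidence) tuple per joint, or None where the detection
    confidence is below min_confidence.
    Assumption: keypoints are stored flat as [x0, y0, c0, x1, y1, c1, ...].
    """
    data = json.loads(json_text)
    people = []
    for person in data.get("people", []):
        flat = person.get("pose_keypoints_2d", [])
        joints = []
        # Walk the flat array three values at a time.
        for i in range(0, len(flat) - 2, 3):
            x, y, conf = flat[i], flat[i + 1], flat[i + 2]
            joints.append((x, y, conf) if conf >= min_confidence else None)
        people.append(joints)
    return people


# Hypothetical sample: one person with three joints, the second one unreliable.
sample = json.dumps({
    "version": 1.3,
    "people": [
        {"pose_keypoints_2d": [100.0, 50.0, 0.9,
                               120.0, 80.0, 0.05,
                               90.0, 85.0, 0.7]}
    ],
})

frame = parse_openpose_frame(sample)
print(frame)
```

From there a Blender script could map each joint to a bone and keyframe it, but that part depends on the rig, so it is left out here.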

Or we could rewrite OpenPose in Haxe with Kha from scratch.

Continuing the discussion from Procedural animation and Motion matching:

I think it would be great if we could get some kind of motion capture system into Blender that we could use for our Armory games. I’ve recently found some machine-learning-based solutions for recognizing human poses from single images or videos, which could make for an extremely economical motion capture solution that needs no expensive equipment or setup.

- TensorFlow.js demo (from the TensorFlow.js website)

- OpenPose. Somebody is also working on a Blender plugin: motion-capture-in-blender-via-openpose (not sure if it works)

Both of these are potential candidates for a Blender motion capture integration. OpenPose definitely seems to be more robust, but TensorFlow.js is easier to get started with, and it might be easier to hack and tweak because of that. To build a 3D representation of a pose you probably need multiple images, but you might also be able to leverage the 2D pose to provide an estimated 3D pose (I don’t know whether that is possible or not).
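To make the "multiple images" idea concrete, here is a rough sketch of how one joint seen from two cameras could be lifted to 3D. It is plain two-ray midpoint triangulation, not anything taken from OpenPose or TensorFlow.js, and it assumes the cameras are already calibrated so that each 2D detection has been turned into a ray (camera position plus direction) in a shared world space. All names and numbers are made up for illustration.

```python
def triangulate_midpoint(center1, dir1, center2, dir2, eps=1e-9):
    """Estimate a 3D point from two camera rays.

    Each ray starts at a camera center and points through the detected
    2D joint. The rays rarely intersect exactly, so we return the midpoint
    of the shortest segment connecting them. Returns None when the rays
    are (nearly) parallel and no stable answer exists.
    """
    def dot(u, v):
        return sum(a * b for a, b in zip(u, v))

    w = [a - b for a, b in zip(center1, center2)]
    a = dot(dir1, dir1)
    b = dot(dir1, dir2)
    c = dot(dir2, dir2)
    d = dot(dir1, w)
    e = dot(dir2, w)

    denom = a * c - b * b
    if abs(denom) < eps:
        return None  # parallel rays: depth cannot be recovered

    # Parameters of the closest points along each ray.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom

    p1 = [o + s * k for o, k in zip(center1, dir1)]
    p2 = [o + t * k for o, k in zip(center2, dir2)]
    return tuple((u + v) / 2.0 for u, v in zip(p1, p2))


# Hypothetical setup: two cameras one unit left and right of the origin,
# both seeing the same joint located at (0, 0, 5).
joint = triangulate_midpoint(
    (-1.0, 0.0, 0.0), (1.0, 0.0, 5.0),
    (1.0, 0.0, 0.0), (-1.0, 0.0, 5.0),
)
print(joint)
```

With noisy detections the two rays will miss each other slightly, which is why the midpoint is used instead of an exact intersection. More cameras would presumably give a steadier result, at the cost of a more involved setup.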

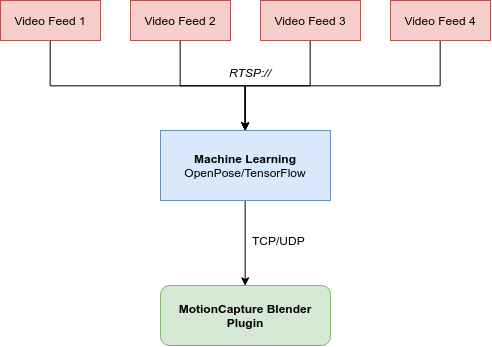

Here is the basic architecture I was thinking of:

I don’t think most lone-wolf indies will spend time doing motion capture.

- There are lots of free motion capture files, so you don’t need to capture animations yourself.

- Procedural animation becomes useful: from a few poses it generates the whole animation.

I hope that this article will show you that we already have all the preliminary bricks to do the job, that is, video on YouTube, motion capture, and Atrap.

Procedural animation uses physics to determine animations, right? It would be really cool to see something for that in Armory.

What’s cool is that none of this stuff we are discussing has to be built into Armory at first. We can create standalone libraries and try stuff out, then, when it is working, we can add it to Armory core.

Seems too easy to be real.

We could make a thousand libraries of animations without needing motion capture.

As you know, the context is that deep learning has dramatically advanced the state of the art in areas like vision, speech, security, and finance, while mocap gives us a very popular source of demonstrations to use as reference motions. What Armory offers today is the ability to integrate some of those existing state-of-the-art bricks, which could then be replaced or improved as time goes by.

For us, the first bricks could be reinforcement learning (actions, state transitions, and rewards), OpenPose (on video, e.g. from YouTube), and Armory’s IK and animation tools. Those preliminary bricks exist at different levels of maturity, and we could consider using them with the objective, perhaps utopian but exciting, of creating an Armory tool for animation with pose estimation.

You give it a starting position, a scene environment, and an expected final position, and it offers you an animation that is credible and ends as close as possible to your given target.

For example, the RL reward (and hence the learned policy) could be based on the quaternion differences between the joint rotations of a simulated Armory skeleton driven by IK and those of the reference motion obtained by running OpenPose on video.
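As a rough illustration of what such a reward could look like, here is a small sketch that compares two poses joint by joint. It assumes unit quaternions in (w, x, y, z) order, and the exponential shape with a `scale` factor is my own choice, borrowed from the general style of imitation rewards, not something Atrap or Armory defines. The joint names are hypothetical.

```python
import math


def quat_angle(q1, q2):
    """Smallest rotation angle (radians) between two unit quaternions.

    The absolute value of the dot product makes q and -q count as the
    same rotation, which they are.
    """
    dot = abs(sum(a * b for a, b in zip(q1, q2)))
    dot = min(1.0, dot)  # guard against rounding slightly above 1
    return 2.0 * math.acos(dot)


def pose_reward(sim_pose, ref_pose, scale=2.0):
    """Reward in (0, 1]: 1.0 when every joint matches the reference.

    sim_pose and ref_pose map joint names to unit quaternions.
    Assumption: every joint in ref_pose also exists in sim_pose.
    """
    error = sum(quat_angle(sim_pose[j], ref_pose[j]) ** 2 for j in ref_pose)
    return math.exp(-scale * error)


IDENTITY = (1.0, 0.0, 0.0, 0.0)
# 90 degree rotation around the Z axis.
QUARTER_TURN_Z = (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))

reference = {"upper_arm": IDENTITY, "forearm": QUARTER_TURN_Z}
matching = {"upper_arm": IDENTITY, "forearm": QUARTER_TURN_Z}
off = {"upper_arm": IDENTITY, "forearm": IDENTITY}

print(pose_reward(matching, reference))
print(pose_reward(off, reference))
```

The first print gives the maximum reward because the poses are identical, and the second gives a much smaller value because the forearm is a quarter turn away from the reference. Tuning `scale` changes how harshly errors are punished.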

The tool should stay in Blender, not become an Armory integration.

Armory is the 3D engine; Blender has all the modeling and animation tools.

As for OpenPose, Armory doesn’t need to know that it exists or that the animations come from OpenPose. Armory only needs the animation actions to work with.

Armory IK or new animation tools are indeed Armory related, not Blender.

I think Armory needs to focus first on getting to a stable full release.

Advanced motion features and tools and machine learning don’t matter when there are lots of issues elsewhere.

Outside of IK, other advanced features should not be a priority; at least Lubos should not work on them.

Getting Armory to the point where it can make a complete game without graphics or physics bugs is the most important thing first.

That’s reasonable, @MagicLord, if you are sure that Lubos is working alone and is not too ambitious for Armory.

I agree with @MagicLord. What he is trying to say is that Lubos is the key developer and we contribute around him. We can’t code a project that big (OpenPose in Blender), at least I can’t; if you can, then you are welcome to. Lubos is like a 10x developer; he can code almost anything he wants to. What @MagicLord is saying is that Lubos, being 10x, should focus on the engine first (graphics, physics, etc.) and not on OpenPose, which isn’t needed NOW and should not be considered a top priority. Don’t get me wrong, OpenPose is amazing, but focusing on fixing the core is more important.

For 3D capture with OpenPose you will need four cameras. You will have to record video with four cameras and then feed it to the deep learning model. I don’t think using four cameras in real time is possible.

OpenPose is a lot more complicated than expected with four cameras. I don’t think the average indie will buy or mess around with four cameras.

Let’s keep this and motion matching for a lot later.

You definitely have a point to a certain degree. Lubos doesn’t need to be working on this right now. But when you have people like @Didier who are at home with machine learning and have the ambition to dream big for Armory, there isn’t anything that should stop him from contributing potentially awesome stuff that he is able to work on.

I plan on contributing to Armory myself and I really have big hopes and expectations for it, but I can’t work on low-level graphics and all the kinds of crazy stuff that Lubos is doing right now. But I might be able to help with a cool machine learning integration with Blender for motion capture. We’re not asking everybody who is working on core stuff to stop what they are doing and help us, but if you are interested and have the time and ability, what is to stop us from trying to make Armory more awesome?

While all this motion capture stuff would be cool, I agree it is a Blender issue, not an Armory one. There are still a few threads out there from people who can’t get any animation working right. I still haven’t been able to (although I haven’t tried in the last month or so). Unless you guys are talking about implementing something for dance games and the like, shouldn’t solid support for animations be a much higher priority?

Yeah, that makes sense. So far I’ve been able to get animations working in my recent tests, and there are some examples, like the third person shooter template, that have it working, so I was trying to figure out whether I could get a capture system set up because I had some brainstorming time.

It is also true that motion capture is more of a Blender concern than Armory if you are not doing realtime IK or procedural animation.

I think the best way to approach the problem is to use free mocap files (from Mixamo etc.), and then we can use AI (ATRAP) to generate the needed animation. Here are some examples: https://www.youtube.com/watch?v=vppFvq2quQ0&list=LLhHrKMq1Y9i-ctT1l7lAhxg&t=0s&index=3 https://www.youtube.com/watch?v=X6Dj4hjV86Q&list=LLhHrKMq1Y9i-ctT1l7lAhxg&t=0s&index=5

Here is some procedural animation: https://www.youtube.com/watch?v=pgaEE27nsQw&list=LLhHrKMq1Y9i-ctT1l7lAhxg&t=0s&index=4 And this is how Armory IK should be: https://www.youtube.com/watch?v=KLjTU0yKS00&list=LLhHrKMq1Y9i-ctT1l7lAhxg&t=0s&index=2

Thanks @Chris.k for all those links, which I think can help us define a preliminary list of requirements: what we hope to find in Armory (and Blender) for future integrated, innovative animation tools, with a kind of generative AI for bones + rigging + IK + animations.

How is the project going? Have you tried Atrap for motion matching etc.?

@Chris.k the Atrap demonstrator is done. Verification of the speed, accuracy, inputs from different sources, visualization, and UI is OK.

As you can see in my last post on AI with Atrap, I am looking to build the next version of Atrap around something that is unique to Armory: a kind of 3DOOP approach. There you will find the first broad outlines of the theoretical approach, which I discussed with @zicklag.

Maybe you could help define what the main requirements for generative AI in Armory could be.
